How to Run an AI Brand Audit: A Step-by-Step Guide

AI Brand Report


Learn how to run an AI brand audit that measures brand visibility in ChatGPT, Gemini, Claude, Grok, and Perplexity, then turns findings into a practical action plan.

Most brands have never audited what AI systems say about them. Not because they don't care, but because until recently there was no clear process for doing it.

That has changed. Running a comprehensive AI brand audit is now a structured, repeatable discipline, and for brands serious about their presence in AI-generated answers it has become as foundational as a traditional SEO audit or a competitive analysis. If your team wants to understand brand visibility in ChatGPT, Gemini, Claude, Perplexity, Grok, and other AI answer engines, an AI brand audit is the place to start.

Definition: An AI brand audit is a structured review of how AI systems describe, cite, compare, and recommend your brand across the prompts your buyers are most likely to ask. It combines an AI visibility audit, an AI search audit, an AI reputation audit, and a competitive share-of-voice analysis into one repeatable process.

An AI brand audit answers the questions that increasingly determine whether prospects discover your brand at all: Does your brand appear in AI responses to relevant queries? When it appears, is it described accurately? How does your AI presence compare to competitors? What signals are driving any gaps?

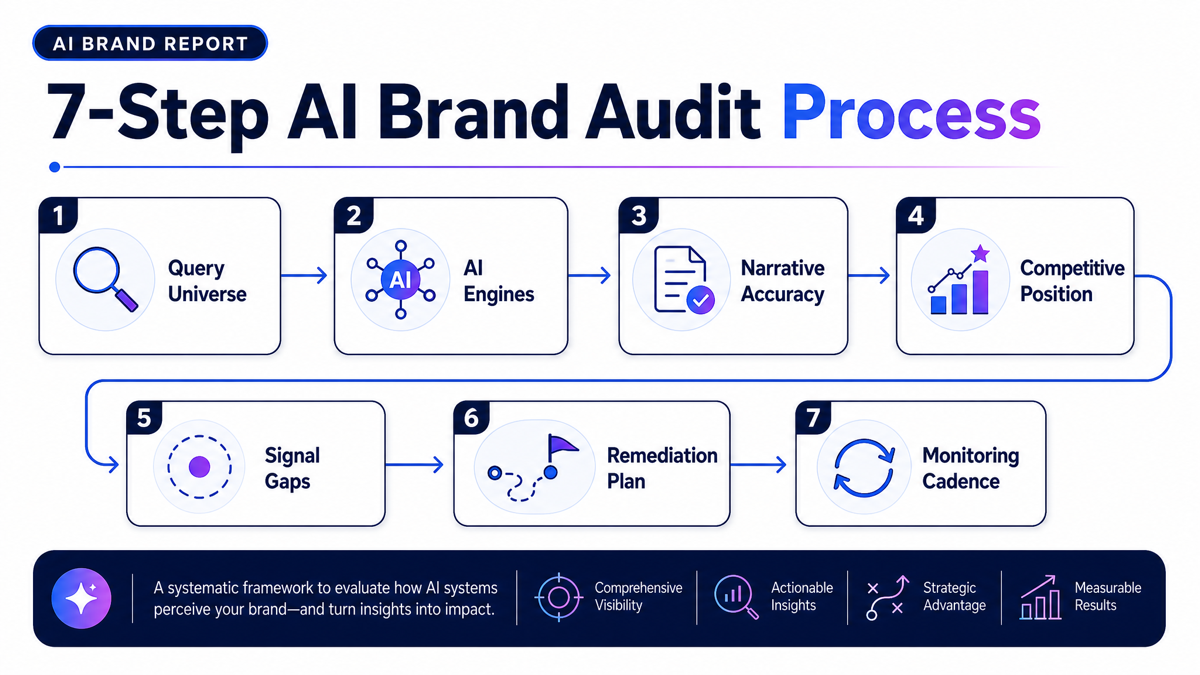

This guide walks you through exactly how to run an AI brand audit and turn the findings into a practical action plan.

What an AI Brand Audit Actually Is

An AI brand audit is a systematic evaluation of how your brand is described, positioned, and recommended across major AI systems. It answers four core questions:

- Presence - Does your brand appear in AI responses to queries relevant to your category?

- Accuracy - When it appears, is it described correctly?

- Sentiment - Is the framing positive, neutral, or negative?

- Competitive Position - How does your AI visibility compare to competitors across the same queries?

Think of it as a reputation audit for the AI era - one that captures what potential customers are learning about your brand before they ever visit your website. The findings from an audit feed directly into your brand narrative engineering strategy and your ongoing AI brand monitoring program.

Step 1: Define Your Query Universe

Your query universe is the set of prompts that represent how real customers research your category. These should be conversational, specific, and varied - reflecting the actual questions buyers ask AI assistants during their research process.

Build your query set across four types:

- Category queries - "What are the best [your category] platforms for [your target use case]?"

- Comparison queries - "How does [your brand] compare to [your top competitor]?"

- Problem queries - "What's the best way to solve [the problem your product solves]?"

- Reputation queries - "What do users think of [your brand]? Is it worth it?"

Aim for 20–30 queries to start. This gives you enough surface area to identify patterns without creating an unmanageable audit process. Prioritize the queries that represent the highest purchase intent - the questions buyers ask closest to a decision.
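One way to keep the query set consistent between audits is to generate it from templates. The sketch below fills the four query types above with placeholder values. The brand, competitor, category, use-case, and problem names are all hypothetical and should be replaced with your own.

```python
# Hypothetical placeholder values; replace with your own brand details.
BRAND = "ExampleBrand"
COMPETITORS = ["CompetitorA", "CompetitorB"]
CATEGORY = "project management"
USE_CASES = ["remote teams", "agencies"]
PROBLEMS = ["missed project deadlines"]


def build_query_universe():
    """Return (query_type, prompt) pairs covering the four query types."""
    queries = []
    for use_case in USE_CASES:
        queries.append(
            ("category", f"What are the best {CATEGORY} platforms for {use_case}?")
        )
    for competitor in COMPETITORS:
        queries.append(
            ("comparison", f"How does {BRAND} compare to {competitor}?")
        )
    for problem in PROBLEMS:
        queries.append(
            ("problem", f"What's the best way to solve {problem}?")
        )
    queries.append(
        ("reputation", f"What do users think of {BRAND}? Is it worth it?")
    )
    return queries


if __name__ == "__main__":
    for query_type, prompt in build_query_universe():
        print(f"{query_type}: {prompt}")
```

With these placeholder lists the function produces six prompts. Adding use cases, competitors, and problems grows the set toward the 20–30 range.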

Step 2: Run Queries Across All Five Major AI Engines

This is where most brands stop short: they check one engine and call it done. That's insufficient. Each AI engine draws on different data sources and produces meaningfully different results. A brand that appears prominently in ChatGPT responses may be largely absent from Gemini, and your customers may not be concentrated on the engine where you perform best.

Run every query on:

- ChatGPT (OpenAI)

- Gemini (Google)

- Claude (Anthropic)

- Grok (xAI)

- Perplexity

For each response, capture:

- Whether your brand was mentioned

- Where in the response it appeared - first, second, briefly mentioned at the end, not at all

- The exact language used to describe your brand

- Which competitors appeared in the same response

- Any sources cited (particularly relevant for Perplexity, which surfaces citations explicitly)

Document everything in a structured format - a spreadsheet with rows for each query and columns for each engine is the minimum viable approach. You need to be able to compare patterns across queries and across engines.
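As a rough illustration, the capture fields listed above can be modeled as one record per query and engine, then exported to CSV for a spreadsheet. The record layout and field names here are assumptions, not a prescribed format. This sketch uses a "long" layout (one row per query-engine pair), which a spreadsheet can pivot into one row per query and one column per engine.

```python
import csv
import io
from dataclasses import asdict, dataclass, field, fields

ENGINES = ["ChatGPT", "Gemini", "Claude", "Grok", "Perplexity"]


@dataclass
class AuditRecord:
    """One AI response to one query on one engine (hypothetical layout)."""
    query: str
    engine: str
    brand_mentioned: bool
    position: str       # e.g. "first", "second", "end", "absent"
    description: str    # exact language used to describe the brand
    competitors: list = field(default_factory=list)
    sources: list = field(default_factory=list)


def to_csv(records):
    """Serialize records to CSV text, joining list fields with '; '."""
    buffer = io.StringIO()
    fieldnames = [f.name for f in fields(AuditRecord)]
    writer = csv.DictWriter(buffer, fieldnames=fieldnames)
    writer.writeheader()
    for record in records:
        row = asdict(record)
        row["competitors"] = "; ".join(record.competitors)
        row["sources"] = "; ".join(record.sources)
        writer.writerow(row)
    return buffer.getvalue()
```

Keeping the exact description text in its own column makes the accuracy review in the next step easier, because the wording can be compared directly against your intended positioning.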

Step 3: Evaluate Narrative Accuracy

For every response where your brand appears, assess whether the description is accurate. Check specifically for:

- Outdated product features - Is the AI describing functionality you've deprecated or significantly changed?

- Incorrect positioning - Are you being framed as a solution for a market segment you don't actually serve?

- Missing differentiators - Are your key competitive advantages absent from the AI's description?

- Wrong pricing tier - Is the AI suggesting you're more expensive or cheaper than you are?

- Incorrect company details - Do basic factual details check out?

Document every inaccuracy. These become your remediation list - and they indicate which signals in the information ecosystem are misleading AI systems about your brand.
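A crude first pass over the captured descriptions can be automated with keyword matching. The sketch below assumes you maintain a small fact sheet of deprecated feature names and key differentiators; both lists are hypothetical. It only flags candidates for review and does not replace a human read of each response.

```python
# Hypothetical fact sheet; replace with your own product details.
DEPRECATED_FEATURES = ["desktop app"]
KEY_DIFFERENTIATORS = ["real-time collaboration"]


def review_description(description):
    """Return (issue_type, term) pairs found by simple keyword matching."""
    text = description.lower()
    issues = []
    # A deprecated feature appearing in the text suggests outdated information.
    for feature in DEPRECATED_FEATURES:
        if feature.lower() in text:
            issues.append(("outdated product feature", feature))
    # A differentiator missing from the text is a candidate gap.
    for differentiator in KEY_DIFFERENTIATORS:
        if differentiator.lower() not in text:
            issues.append(("missing differentiator", differentiator))
    return issues
```

For example, `review_description("ExampleBrand offers a desktop app for small teams.")` flags the deprecated "desktop app" and the absent "real-time collaboration". Positioning and pricing errors usually need human judgment, since they rarely reduce to a keyword.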

Inaccurate AI descriptions are not passive problems. They actively shape prospect perception before any human interaction occurs, and without monitoring, they can persist for months before anyone on your team notices.

Step 4: Score Your Competitive Position

For each query, note which competitors appeared alongside you and how frequently each appeared across your full query set. This gives you a rough AI share of voice comparison - the metric that frames your visibility not in absolute terms but as a competitive position.

You're looking for:

- Which competitors appear more frequently than you across the same queries?

- Are there specific query types where you're consistently absent but competitors are present?

- How are competitors described relative to you - are there framing advantages they consistently receive?

This competitive map tells you where your AI visibility gaps are most significant and which competitors are winning the discovery moments you're losing. For a deeper treatment of this metric, see "AI Share of Voice: What It Is and How to Win It" under Related Articles.
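The frequency comparison described above can be sketched in a few lines. This example assumes one simple definition of AI share of voice: the fraction of captured responses in which a brand is mentioned at least once. Other definitions exist (for instance, a brand's mentions divided by all brand mentions), and the brand names are placeholders.

```python
from collections import Counter


def share_of_voice(responses):
    """Return the fraction of responses that mention each brand.

    `responses` is a list where each item is the list of brand names
    mentioned in one AI response.
    """
    counts = Counter()
    for brands in responses:
        counts.update(set(brands))  # count each brand once per response
    total = len(responses)
    return {brand: count / total for brand, count in counts.items()}


# Four hypothetical responses from the audit log.
responses = [
    ["ExampleBrand", "CompetitorA"],
    ["CompetitorA"],
    ["CompetitorA", "CompetitorB"],
    ["ExampleBrand"],
]
rates = share_of_voice(responses)
# ExampleBrand appears in 2 of 4 responses, CompetitorA in 3 of 4,
# and CompetitorB in 1 of 4.
```

Running the same calculation on a subset of responses, such as comparison queries only, shows the query types where you are consistently absent while competitors are present.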

Step 5: Identify Your Signal Gaps

For every area where your brand underperforms - absent from certain queries, described inaccurately, outperformed by competitors - trace the issue to a root cause. The most common culprits:

Thin third-party coverage. Not enough independent mentions in authoritative sources. If the only substantial descriptions of your brand come from your own website, AI systems don't have enough independent validation to recommend you with confidence.

Inconsistent messaging. Different sources describe your brand differently, creating a fragmented AI narrative. Your website says one thing; industry press says another; directories say a third thing. AI systems synthesize these signals - and when they conflict, confidence drops.

Outdated content. The most authoritative sources about your brand are old. AI systems weight recency; a brand whose most significant coverage is from three years ago loses to a competitor with fresh, detailed coverage from the past six months.

Missing category signals. Your brand isn't clearly and repeatedly associated with the right category across enough independent sources. How AI assistants decide which brands to recommend depends heavily on this category association signal.

Review gaps. Thin or mixed review presence across major platforms deprives AI systems of the social proof signals they use to validate recommendations.
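Once each finding has been traced to a root cause, a simple tally shows which gap dominates. The sketch below assumes each finding is a small dictionary with a `root_cause` label drawn from the five categories above. The structure and field names are illustrative only.

```python
from collections import Counter

# The five root-cause categories described above.
SIGNAL_GAPS = {
    "thin third-party coverage",
    "inconsistent messaging",
    "outdated content",
    "missing category signals",
    "review gaps",
}


def tally_signal_gaps(findings):
    """Count findings per root cause, most frequent first."""
    counts = Counter()
    for finding in findings:
        gap = finding["root_cause"]
        if gap not in SIGNAL_GAPS:
            raise ValueError(f"Unknown signal gap: {gap}")
        counts[gap] += 1
    return counts.most_common()


# Hypothetical findings from an audit.
findings = [
    {"query": "best platforms for agencies", "root_cause": "outdated content"},
    {"query": "is ExampleBrand worth it", "root_cause": "review gaps"},
    {"query": "ExampleBrand vs CompetitorA", "root_cause": "outdated content"},
]
```

Here `tally_signal_gaps(findings)` ranks "outdated content" first with two findings, which suggests where remediation effort should start.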

Step 6: Build Your Remediation Plan

Turn your findings into a prioritized action list. Not all gaps are equally important - prioritize based on the query types that represent the highest purchase intent, and the fixes that are most achievable in the near term.

A typical remediation plan includes:

- Content to create or update - Depth content, comparison pages, use-case guides, and updated product descriptions that reflect current positioning

- PR and media targets to pursue - Specific publications and formats that would create authoritative third-party signals in your category

- Review platforms to strengthen - Where to focus review generation effort for maximum AI signal impact

- Structured data to implement or correct - Schema markup that gives AI systems direct, machine-readable information about your brand

- Third-party listings and directories to update - Ensuring your brand is accurately and completely described in the sources AI systems draw on most heavily
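For the structured data item, a minimal sketch of schema.org Organization markup might look like the following. The brand name, URL, description, and profile link are placeholders, and the helper function name is hypothetical. Which properties matter for your brand is a judgment call this example does not settle.

```python
import json

# Placeholder values; replace with your brand's real details.
organization = {
    "@context": "https://schema.org",
    "@type": "Organization",
    "name": "ExampleBrand",
    "url": "https://www.example.com",
    "description": "Project management platform for remote teams.",
    "sameAs": ["https://www.linkedin.com/company/examplebrand"],
}


def to_json_ld_script(data):
    """Wrap a dictionary as a JSON-LD script tag for a page's HTML."""
    body = json.dumps(data, indent=2)
    return f'<script type="application/ld+json">\n{body}\n</script>'


snippet = to_json_ld_script(organization)
```

The `description` here should match the positioning used elsewhere, since the point of the markup is to give a consistent, machine-readable statement of what the brand is.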

Prioritize ruthlessly. A 10-point plan that never gets executed is less valuable than three well-chosen actions carried out consistently.
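One simple way to make that prioritization explicit is to score each candidate action and keep only the top few. The sketch below assumes a 1–5 rating for purchase intent and for feasibility and multiplies them. The scale, the scoring formula, and the example actions are all assumptions, not a standard method.

```python
def prioritize(actions, top_n=3):
    """Return the top_n actions ranked by intent x feasibility."""
    ranked = sorted(
        actions,
        key=lambda action: action["intent"] * action["feasibility"],
        reverse=True,
    )
    return ranked[:top_n]


# Hypothetical candidate actions, each rated 1-5 on both dimensions.
actions = [
    {"action": "Update comparison page", "intent": 5, "feasibility": 4},
    {"action": "Pitch trade publication", "intent": 4, "feasibility": 2},
    {"action": "Correct directory listings", "intent": 3, "feasibility": 5},
    {"action": "Launch review campaign", "intent": 4, "feasibility": 3},
]
shortlist = [item["action"] for item in prioritize(actions)]
```

With these ratings the shortlist is the comparison page update, the directory corrections, and the review campaign. The trade publication pitch drops off because its feasibility is low.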

Step 7: Establish a Monitoring Cadence

A one-time audit is a snapshot. The AI visibility landscape changes continuously - competitors publish content, new signals emerge, and models update their understanding. Your audit needs to be repeated on a regular cadence.

Monthly is the minimum for most brands. Weekly is advisable in competitive categories where the difference between appearing and not appearing in AI recommendations translates directly to pipeline.

This is where systematic tools become necessary. A full manual audit of 25 queries across five engines means collecting and reviewing 125 responses, then comparing them against the previous period. Doing that every month across a competitive landscape is impractical without automation.
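To make the scale and the period-over-period comparison concrete, here is a small sketch. It assumes you already have a brand mention rate per engine for each audit period. The rates shown are made-up illustration values, not real results.

```python
def responses_per_cycle(num_engines, num_queries):
    """Number of responses to collect in one audit cycle."""
    return num_engines * num_queries


def compare_periods(previous, current):
    """Return the change in mention rate per engine between two audits."""
    engines = sorted(set(previous) | set(current))
    return {
        engine: round(current.get(engine, 0.0) - previous.get(engine, 0.0), 3)
        for engine in engines
    }


# Illustrative mention rates (fraction of responses mentioning the brand).
previous = {"ChatGPT": 0.40, "Gemini": 0.20}
current = {"ChatGPT": 0.48, "Gemini": 0.16}
changes = compare_periods(previous, current)
```

In this example `responses_per_cycle(5, 25)` is 125, and `changes` shows a gain of 0.08 on ChatGPT and a loss of 0.04 on Gemini. That is the kind of per-engine movement a regular cadence is meant to surface.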

Turning Audit Findings Into Ongoing Strategy

The AI brand audit is not a project you complete and archive. It's the foundation of an ongoing AI visibility strategy. The patterns you identify through regular audits tell you:

- Which content investments are moving the needle

- Where competitor activity is threatening your position

- Whether narrative fixes are propagating through the information ecosystem

- Which AI engines need specific attention

Brands that audit once and act once will see improvements plateau. Brands that audit regularly, adapt continuously, and treat AI visibility as an ongoing strategic discipline are the ones that build durable, defensible positions in AI-generated recommendations.

AI Brand Audit Checklist

Use this AI brand audit checklist as a practical working version of the process:

- Define 20–30 high-intent buyer prompts across category, comparison, problem, and reputation queries.

- Run each prompt across ChatGPT, Gemini, Claude, Grok, and Perplexity.

- Record whether your brand appears, where it appears, how it is described, and which sources are cited.

- Capture competitor mentions for the same prompts so you can calculate relative AI share of voice.

- Flag inaccurate, outdated, incomplete, or off-position brand descriptions.

- Group findings into signal gaps, including thin third-party coverage, inconsistent messaging, outdated content, missing category signals, and review gaps.

- Prioritize remediation work based on revenue impact, purchase intent, and feasibility.

- Repeat the audit monthly, or weekly during launches, PR pushes, or competitive campaigns.

Key Takeaways

- An AI brand audit answers four questions: presence, accuracy, sentiment, and competitive position

- Run every query across all five major AI engines - results differ significantly between platforms

- Document not just whether your brand appears, but exactly how it's described and how that compares to your intended positioning

- Map competitor performance across the same query set to understand your relative AI share of voice

- Trace underperformance to root causes: thin coverage, inconsistent messaging, outdated content, missing category signals, or review gaps

- Build a prioritized remediation plan and establish a regular monitoring cadence - a one-time audit produces a snapshot, not a strategy

Frequently Asked Questions

What is an AI brand audit?

An AI brand audit is a structured review of how AI systems describe, cite, compare, and recommend your brand across important buyer prompts. It helps teams understand whether AI systems can accurately connect the brand to the right category, use cases, differentiators, and competitors.

What should you check first?

Start by testing the prompts your buyers are most likely to ask. Look for whether your brand appears, how it is described, which competitors appear beside it, and whether the answer points to credible sources.

How often should you run an AI brand audit?

Monthly is the minimum useful cadence for most teams. Weekly reviews make sense during launches, competitive campaigns, PR activity, or any period where AI-generated answers could shift quickly.

How can AI Brand Report help?

AI Brand Report runs structured prompt checks across major AI engines, tracks brand and competitor visibility, and turns the results into a prioritized list of actions so your team can improve how AI systems describe and recommend your brand.

Check Your AI Visibility

If you want to see how AI systems describe and recommend your brand today, start with a free AI visibility report. AI Brand Report checks your presence across major AI engines, compares your visibility against competitors, and highlights the gaps most worth fixing first.

Get your free AI visibility report.

Related Articles

- AI Brand Visibility: The Complete Guide To Being Recommended By AI Systems - The comprehensive framework for understanding and managing your AI presence

- AI Brand Monitoring - How to systematically track your brand across AI systems after the initial audit

- AI Share of Voice: What It Is and How to Win It - How to measure and improve your competitive position in AI-generated recommendations

- Brand Narrative Engineering For AI Systems - How to fix the signal gaps your audit reveals

- Structured Data For AI Visibility - Technical foundations for ensuring AI systems understand your brand accurately

- How AI Assistants Choose Which Brands To Recommend - The specific signals AI systems evaluate - essential context for interpreting audit findings